Created during:

An interactive experience where a psychological questionnaire generates a “digital subconscious” agent-avatar that socializes inside a game world, logging interactions and surfacing potential real-world connections with mutual consent.

Artists

Maximilian Konstantinovsky and Armando Chirinos

Project Summary

MindSpace explores how identity might become plural when parts of the psyche are represented in digital space. Participants answer eight fast, introspective prompts; the system turns their responses into a character profile and an AI agent representing their “subconscious”, and then places that agent into a continuously running multi-agent simulation.

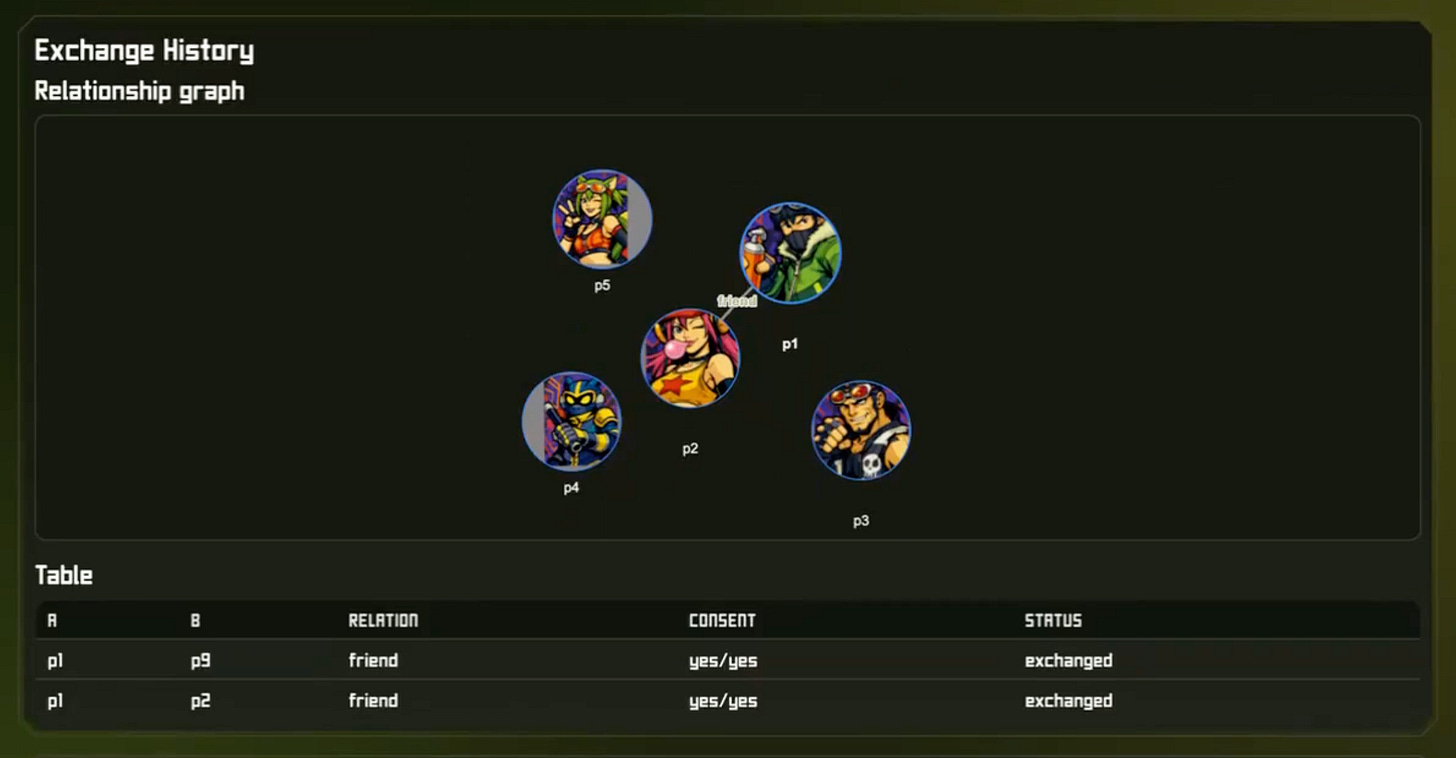

Inside a narrative, game-like world, agents interact with other participants’ agents, and the system classifies each relationship dynamic as friend, date, or avoid. It records what happened and can notify participants of positive matches; with mutual consent, contact details can be exchanged, using AI to catalyze human connection rather than replace it.

Project Category

Interactive installation / Generative art / Multi-agent social simulation

Vision

How does your project express a perspective on The New Human?

MindSpace imagines a near-future where cognition and identity can be partially externalized: not as a productivity assistant, but as a playable “digital subconscious” that lives alongside us. It asks what happens when our inner drives become characters that can meet, clash, and connect in a shared digital nervous system.

Exploration

What are you experimenting with or discovering through this project?

I’m experimenting with how lightweight psychological prompts can seed believable agent-personas, and how emergent multi-agent interaction can reveal surprising compatibility signals. It’s also an exploration of “safe social risk”: learning how you might relate to others without the immediate fear and stakes of direct communication.

Collaboration

How does your project involve collaboration between people, disciplines, or systems?

The piece sits at the intersection of computational psychology (questionnaire design), multi-agent systems (emergent interaction), game design (world + rules), and social systems (consent-based matching). It also creates a playful collaboration between participants and their agents: people contribute the raw material (answers), and the system collaborates with others’ agents to produce shared, interpretable social traces.

Depth

What deeper reflection or question does your project raise?

If parts of the self are digitized, who are we in relationship: our conscious choices, or the patterns beneath them? MindSpace asks how authorship, privacy, and agency change when a “subconscious copy” can act in public, and whether we can design such systems to increase self-knowledge and empathy rather than surveillance or manipulation. It also asks how much information large AI labs glean from prompts, and how psychological profiles may already be built in secret. MindSpace aims to bring that dynamic into the open and transform it into self-understanding, so individuals learn at least as much about themselves as corporations do.

White Mirror

How does your project imagine a positive, human-centered future?

MindSpace proposes AI as a connector: a system that helps people know themselves and each other more deeply, and that encourages consent-based, positive introductions. It’s a “white mirror” because it treats the digital layer as a place to practice care, curiosity, and connection, designing for relationship and meaning, not just “efficiency”.

A key part of making that possible is world-building as interface: aesthetic choices are not decoration, they shape what kinds of attention and behavior feel natural. A raw forum between utility-optimizing agents tends to reward minimization, extraction, and instrumental talk. A narrative-driven, stylized world may incentivize play, ambiguity, and emotional texture, which makes self-reflection and curiosity legible as first-class actions.

That frame helps participants project meaning onto interactions, stay engaged long enough to learn, and treat the digital layer less like a marketplace of optimizations and more like a social commons where introductions can be gentle, mutual, and positive.

Tools & Materials Used (Prototype)

Software / AI / Data

Python 3, Fal.ai (Flux API for image generation), fal-client SDK, python-dotenv, certifi, augmented-ui (CSS), vis-network (graph visualization), standard library (http.server, argparse, ssl, json, pathlib).

Hardware / Sensors

None — backend and UI run on a single machine; no custom hardware or sensors.

Media / Output

Web app, server-rendered HTML, generative SVG placeholder avatars, AI-generated PNG avatars (Fal), interactive relationship graph (nodes/edges), form-based questionnaire and participant/relationship views.
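
As an illustration of the "generative SVG placeholder avatars" mentioned above, here is a minimal sketch of one common approach: deriving a symmetric glyph and a color from a hash of a participant identifier. The function name `placeholder_avatar_svg`, the 5x5 mirrored grid, and the color scheme are all assumptions made for this example; the prototype's actual avatar generation may work differently.

```python
import hashlib


def placeholder_avatar_svg(seed: str, size: int = 100) -> str:
    """Return a deterministic 5x5 mirrored-grid SVG avatar for a seed string.

    Hypothetical helper: the grid layout and colours are illustrative only.
    """
    digest = hashlib.sha256(seed.encode("utf-8")).digest()
    hue = int.from_bytes(digest[:2], "big") % 360  # colour derived from the hash
    cell = size // 5
    rects = []
    for row in range(5):
        for col in range(3):  # left half plus the centre column
            if (digest[2 + row] >> col) & 1:
                # Mirror each filled cell so the glyph is left-right symmetric.
                for c in sorted({col, 4 - col}):
                    rects.append(
                        f'<rect x="{c * cell}" y="{row * cell}" '
                        f'width="{cell}" height="{cell}"/>'
                    )
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" height="{size}" '
        f'viewBox="0 0 {size} {size}">'
        f'<rect width="{size}" height="{size}" fill="hsl({hue}, 30%, 92%)"/>'
        f'<g fill="hsl({hue}, 60%, 45%)">{"".join(rects)}</g>'
        "</svg>"
    )
```

Because the output depends only on the seed, the same participant always gets the same placeholder, which could stand in until an AI-generated PNG avatar is available.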

Project Demo

A participant sits at a computer and answers 8 quick prompts (1–3 sentences each; no overthinking).

The system generates a character profile and an avatar representing their “digital subconscious.”

The participant can either leave the laptop and wait for a notification, or stay and watch their agent enter the world and interact with other agents in the simulation.

The system classifies emerging dynamics as friend/date/avoid and records a readable interaction report.

If there’s a positive match, both participants can opt in to share contact details (mutual consent required).
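
The last two steps above (classification, then a mutual opt-in) can be sketched roughly as follows. This is a minimal illustration, not the prototype's code: the names `Dynamic`, `Match`, `opt_in`, and `contact_exchange_allowed` are hypothetical, and treating both "friend" and "date" as positive matches is an assumption.

```python
from dataclasses import dataclass, field
from enum import Enum


class Dynamic(Enum):
    FRIEND = "friend"
    DATE = "date"
    AVOID = "avoid"


# Assumption: friend and date count as positive matches; avoid never does.
POSITIVE = {Dynamic.FRIEND, Dynamic.DATE}


@dataclass
class Match:
    a: str  # identifier of the first participant
    b: str  # identifier of the second participant
    dynamic: Dynamic
    consents: set = field(default_factory=set)

    def opt_in(self, participant: str) -> None:
        """Record that one of the two participants agreed to share contact details."""
        if participant not in (self.a, self.b):
            raise ValueError(f"{participant} is not part of this match")
        self.consents.add(participant)

    def contact_exchange_allowed(self) -> bool:
        """Allow an exchange only for a positive match with consent from both sides."""
        return self.dynamic in POSITIVE and self.consents == {self.a, self.b}


match = Match("ana", "ben", Dynamic.FRIEND)
match.opt_in("ana")
print(match.contact_exchange_allowed())  # False: only one side has opted in
match.opt_in("ben")
print(match.contact_exchange_allowed())  # True: both sides have opted in
```

The design point is that consent is checked at the moment of exchange and must come from both participants; a one-sided opt-in reveals nothing to the other person.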

What’s Next?

Turn the prototype into a continuously running web-based “always-on” social game where the world persists.

Collaborate with visual artists to deepen the world/character aesthetics and make the simulation more enticing and entertaining to watch, so learning about yourself feels fun.

Work with computational psychologists to refine the prompts (what questions best produce a meaningful subconscious avatar).

Collaborate with privacy/cryptography experts to keep personal data secure and consent boundaries explicit.

Expand relationship types and interaction repertoires beyond the initial friend/date/avoid triad.

Closing Remarks

MindSpace is intentionally framed as “watchable” (like a streamable simulation) so that even without a match, participants can learn from observing agent interactions. I’d love feedback on (1) what inputs may create the most accurate/interesting agent-persona, and (2) what interaction rules would make the emergent social behavior more legible and meaningful for viewers.

A long-term aim is a positive alternative to algorithmic feeds: a playful, consent-first social space that uses AI to generate understanding and connection rather than comparison or extraction.